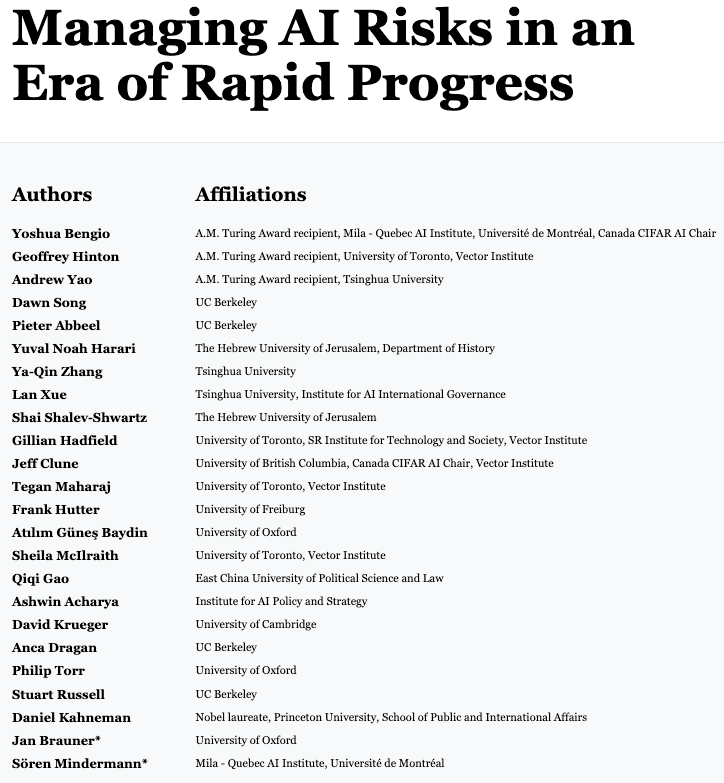

Managing AI Risks in an Era of Rapid Progress

Prominent AI researchers, including Geoffrey Hinton, Yoshua Bengio, Stuart Russell, and others, are urging the establishment of global regulations to ensure AI is used responsibly. They propose creating a supervisory body, similar to the Nuclear Energy Agency, to act as a watchdog over the most advanced AI systems developed using high-end supercomputers. At the same time, they recommend exempting smaller, low-risk AI models and academic studies from such regulations. This AI watchdog agency would need access to advanced AI systems before deployment so it can evaluate them for dangerous capabilities.

My takeaway

I’m up for such regulation as long as small and mid-sized AI companies aren’t affected.

But how would they differentiate between the smaller, low-risk AI models and the high-risk ones?

And how can we make countries that don’t trust each other agree on such regulations?

Moreover, how would such regulations be enforced globally?

Would it end up like the climate change conferences, where the world failed to secure a solid commitment?